Next-gen processor technologies pair modular, chiplet-based designs with workload-aware software to improve scalability and yield. Heterogeneous architectures place general-purpose cores, GPUs, accelerators, and memory on a single package so that each workload runs on the unit best suited to it. This enables dynamic mapping, energy-aware scheduling, and adaptive cooling, supported by neural compilers and evaluation frameworks. Real-world constraints such as power, software ecosystems, and security shape both the hardware and the software built around it, which favors rapid but carefully scrutinized iteration.

What Are Next-Gen Processor Technologies

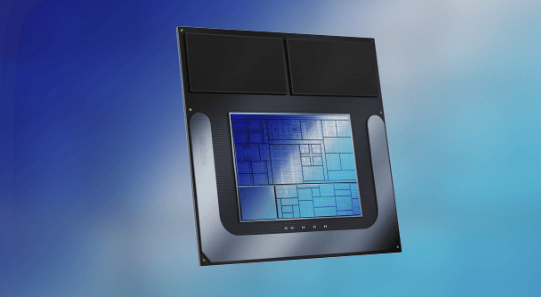

Next-gen processor technologies combine modular design with workload-specific hardware. Chiplet integration places diverse functions on a single package, improving scalability and yield because a smaller die is less likely to contain a manufacturing defect. Machine learning accelerators target specific computational motifs, speeding up AI tasks without enlarging general-purpose cores. Moving away from monolithic silicon makes it easier to experiment, optimize power, and iterate quickly while preserving compatibility across ecosystems.
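The yield argument can be made concrete with the textbook Poisson defect model, in which the probability that a die is defect-free is exp(-area × defect density). The sketch below is only an illustration: the function names, the 6 cm² total area, and the 0.1 defects/cm² density are assumptions chosen for the example, and it ignores packaging cost, assembly yield, and the extra area that die-to-die interconnect needs.

```python
import math


def poisson_yield(area_cm2: float, defect_density_per_cm2: float) -> float:
    """Fraction of dies expected to be defect-free under a Poisson defect model."""
    return math.exp(-area_cm2 * defect_density_per_cm2)


def silicon_per_good_package(total_area_cm2: float, num_dies: int,
                             defect_density_per_cm2: float) -> float:
    """Expected silicon area fabricated per working package.

    Assumes the total area is split into equal dies and that each die is
    tested before assembly, so a defect discards one die, not the package.
    """
    die_area = total_area_cm2 / num_dies
    return total_area_cm2 / poisson_yield(die_area, defect_density_per_cm2)


if __name__ == "__main__":
    TOTAL_AREA = 6.0       # cm^2, hypothetical
    DEFECT_DENSITY = 0.1   # defects per cm^2, hypothetical
    for dies in (1, 2, 4):
        die_yield = poisson_yield(TOTAL_AREA / dies, DEFECT_DENSITY)
        cost = silicon_per_good_package(TOTAL_AREA, dies, DEFECT_DENSITY)
        print(f"{dies} die(s): per-die yield {die_yield:.3f}, "
              f"silicon per good package {cost:.2f} cm^2")
```

Under these assumptions, splitting the same logic across more, smaller dies lowers the silicon wasted per working package, which is the intuition behind the yield claim. Real cost models are considerably more involved.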

How Heterogeneous Architectures Boost Performance

Heterogeneous architectures combine diverse processing elements—such as general-purpose cores, specialized accelerators, and memory hierarchies—on a single platform, enabling workloads to be mapped to the most suitable unit.

Chiplets complement this approach: modular blocks can each be optimized for throughput or energy and then combined on one package.

Performance scales with workload-aware scheduling, but thermal limits force careful power–area tradeoffs and adaptive cooling strategies.
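As a rough illustration of workload-aware scheduling, the sketch below greedily places each task on the unit that would finish it earliest, given what is already queued there. It is a minimal example under stated assumptions, not a production scheduler: the `Unit` and `Task` types, the unit names, and all throughput figures are hypothetical, and it ignores data-transfer cost, power, and thermal limits.

```python
from dataclasses import dataclass


@dataclass
class Unit:
    name: str
    ops_per_sec: dict  # task kind -> sustained throughput; missing kind = unsupported


@dataclass
class Task:
    name: str
    kind: str
    ops: float


def schedule(tasks, units):
    """Greedy earliest-finish-time placement; returns {task name: unit name}."""
    busy_until = {u.name: 0.0 for u in units}
    placement = {}
    # Place the largest tasks first so they get the best-suited units.
    for task in sorted(tasks, key=lambda t: t.ops, reverse=True):
        candidates = [u for u in units if u.ops_per_sec.get(task.kind, 0) > 0]
        if not candidates:
            raise ValueError(f"no unit supports task kind {task.kind!r}")

        def finish_time(unit):
            return busy_until[unit.name] + task.ops / unit.ops_per_sec[task.kind]

        best = min(candidates, key=finish_time)
        busy_until[best.name] = finish_time(best)
        placement[task.name] = best.name
    return placement


if __name__ == "__main__":
    units = [
        Unit("cpu", {"scalar": 1e9, "matmul": 1e9}),
        Unit("npu", {"matmul": 2e10}),
    ]
    tasks = [
        Task("gemm_large", "matmul", 4e10),
        Task("parse", "scalar", 2e9),
        Task("gemm_small", "matmul", 1e9),
    ]
    print(schedule(tasks, units))
```

The greedy rule is chosen for brevity. A real runtime would also weigh energy, memory locality, and thermal headroom when choosing a unit.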

Evaluating Chiplets, AI Accelerators, and Quantum-Inspired Ideas

Evaluation should rely on objective signals, such as how well neural compilers translate models into hardware-specific code and how resilient results are to errors across heterogeneous execution paths.

Careful scrutiny exposes the tradeoffs and guides designers toward architectures that stay adaptable while remaining rigorously optimized.

Real-World Implications: From Power to Software Ecosystems

The discussion moves from assessing modular and specialized units to examining how these choices shape real-world outcomes, notably power profiles and software ecosystems.

In practice, these architectures trade peak performance for sustained, consistent performance, which affects edge cooling strategies and thermal envelopes.

Software tunneling brings both challenges and opportunities for security, isolation, and portability, and developers must balance governance, performance, and flexibility in a rapidly evolving landscape.

Frequently Asked Questions

How Cost-Effective Are Next-Gen Processors in Typical Workloads?

Cost efficiency depends on the workload. In typical workloads, next-gen processors often deliver better performance per watt, but the gains depend on optimization, software maturity, and usage patterns. Teams can experiment with them freely as long as they weigh cost against measured efficiency.

What Are Failure Rates and Reliability Concerns?

Failure rates and reliability concerns vary by design and workload. Higher-performance chips can show stronger thermal and aging effects, while advanced fabrication may mitigate some of these issues. The practical response is cautious deployment, continuous monitoring, and robust error handling.

How Do You Compare Performance Across Architectures?

Researchers note a variance of about 15% in cross-architecture benchmarks, so a single score rarely settles the question. A sound comparison considers both processor efficiency and memory bandwidth, and examines workload sensitivity, scaling behavior, and latency.
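One common way to relate compute capability and memory bandwidth is the roofline model, where attainable throughput is the smaller of peak compute and bandwidth multiplied by arithmetic intensity (FLOPs per byte moved). The sketch below applies it to two made-up devices. The device names and all numbers are assumptions for illustration, not measurements of real hardware.

```python
def attainable_gflops(peak_gflops: float, mem_bw_gb_per_s: float,
                      intensity_flops_per_byte: float) -> float:
    """Roofline bound: limited by either peak compute or memory traffic."""
    return min(peak_gflops, mem_bw_gb_per_s * intensity_flops_per_byte)


# Hypothetical devices: (peak GFLOP/s, memory bandwidth GB/s)
DEVICES = {
    "device_a": (2000.0, 400.0),  # modest compute, high bandwidth
    "device_b": (8000.0, 200.0),  # high compute, lower bandwidth
}


def faster_device(intensity_flops_per_byte: float) -> str:
    """Name of the device with the higher roofline bound at this intensity."""
    return max(
        DEVICES,
        key=lambda name: attainable_gflops(*DEVICES[name], intensity_flops_per_byte),
    )


if __name__ == "__main__":
    for intensity in (2.0, 10.0, 50.0):
        bounds = {
            name: attainable_gflops(peak, bw, intensity)
            for name, (peak, bw) in DEVICES.items()
        }
        print(f"intensity {intensity:>5.1f} FLOP/byte: {bounds} "
              f"-> {faster_device(intensity)}")
```

With these example numbers, the bandwidth-rich device leads on memory-bound work and the compute-rich device leads once arithmetic intensity is high enough. This is why a comparison needs to state which workloads it covers.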

What Training Data Exists for AI Accelerator Benchmarks?

Training data for AI accelerator benchmarks includes curated benchmarking datasets and synthetic ensembles, and workload diversity makes model evaluation more robust. Gaps in real-world applicability remain, which calls for transparent data provenance and cross-domain validation to make benchmarks more reliable.

How Will Software Ecosystems Adapt to New Hardware?

One surprising stat: software ecosystems grow at roughly 20% compounded annually as hardware evolves. They adapt by adopting adaptive APIs and energy-aware scheduling, which enable flexible optimization, modular abstractions, and performance portability across heterogeneous accelerators.
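To make energy-aware scheduling concrete, the sketch below picks the backend that uses the least energy (power × time) while still meeting a deadline. It is a toy model under stated assumptions: the `pick_backend` function, the backend names, and the throughput and power figures are all hypothetical, and it treats power draw as constant while a job runs.

```python
from typing import Optional

# Hypothetical backends: name -> (sustained ops/s, average watts while busy)
BACKENDS = {
    "cpu": (1e9, 15.0),
    "gpu": (1e10, 200.0),
    "npu": (5e9, 10.0),
}


def pick_backend(work_ops: float, deadline_s: float,
                 backends: dict = BACKENDS) -> Optional[str]:
    """Return the lowest-energy backend that finishes within the deadline.

    Returns None when no backend can meet the deadline.
    """
    feasible = []
    for name, (ops_per_sec, watts) in backends.items():
        runtime_s = work_ops / ops_per_sec
        if runtime_s <= deadline_s:
            energy_joules = watts * runtime_s
            feasible.append((energy_joules, name))
    if not feasible:
        return None
    return min(feasible)[1]


if __name__ == "__main__":
    for deadline in (5.0, 1.5, 0.5):
        print(f"deadline {deadline}s -> {pick_backend(1e10, deadline)}")
```

Keeping the choice behind a single function is one way to read "adaptive API": application code states the work and the deadline, and the runtime decides where it runs.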

Conclusion

Next-gen processor technologies promise modularity and workload-tailored hardware, enabling smarter resource allocation across heterogeneous units. By combining chiplets, AI accelerators, and memory within unified packages, these designs aim for efficiency, scalability, and resilience. Yet real-world constraints, including power, software ecosystems, security, and governance, must guide iteration and adoption. The evolving landscape resembles a living organism, constantly reconfiguring to survive and thrive; curiosity, rigorous analysis, and responsible innovation will determine which architectures reach widespread impact.